DeepSeek quietly rolled out what many call “V4-lite”: 1M context, May 2025 cutoff, text-only, with uneven app/API availability. The headline isn’t the name; it’s the attention stack.

Observers say this feels like frontier-level sparse/NSA-style attention, not vanilla block sparsity. Long-context tests suggest the model doesn’t just retrieve; it inhabits context.

On the infra side, MLA with a single KV head exposed tensor parallelism inefficiencies. SGLang shipped a fix: DP Attention (zero KV redundancy) plus a Rust router, claiming +92% throughput and 275% cache hit rate. This ties architecture quirks directly to cluster-level serving costs, a rare public insight.
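A back-of-the-envelope sketch shows why a single KV head is awkward for tensor parallelism. Every number below (sequence length, latent width, layer count, request count, GPU count) is a hypothetical placeholder, not a figure from DeepSeek or SGLang. The only assumption being illustrated is that a KV cache which cannot be split across heads ends up copied on every tensor-parallel rank, while data-parallel attention keeps each request's cache on one rank.

```python
def kv_bytes_per_request(seq_len, latent_dim, num_layers, bytes_per_elem=2):
    """Bytes for one request's compressed KV cache:
    one latent vector per token per layer."""
    return seq_len * latent_dim * num_layers * bytes_per_elem


def cluster_kv_bytes(num_requests, per_request_bytes, num_gpus, replicated):
    """Total KV bytes held across the whole GPU group.

    replicated=True  -> every rank stores every request (TP with one KV head).
    replicated=False -> each request lives on exactly one rank (DP attention).
    """
    copies = num_gpus if replicated else 1
    return num_requests * per_request_bytes * copies


# Hypothetical workload, chosen only for illustration.
per_req = kv_bytes_per_request(seq_len=32_768, latent_dim=576, num_layers=60)
tp_total = cluster_kv_bytes(64, per_req, num_gpus=8, replicated=True)
dp_total = cluster_kv_bytes(64, per_req, num_gpus=8, replicated=False)

redundancy = tp_total / dp_total
print(f"per-request KV: {per_req / 2**30:.2f} GiB")
print(f"TP stores {redundancy:.0f}x the KV bytes of DP attention")
```

Under these assumptions the redundancy factor simply equals the tensor-parallel degree, which is the memory that “zero KV redundancy” would hand back to batching and caching.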

Zooming out, DeepSeek’s MoE recipes, MLA, sparse attention, and GRPO are shaping open LLM design. Whether every “first” claim holds or not, the influence is undeniable.

Attention, not scale, is becoming the real frontier battleground.

China’s “open model week” continues: today Zhipu AI unveiled GLM-5 (aka Pony Alpha). It scales from 355B → 744B parameters (40B active), with pretraining bumped to 28.5T tokens.

Key upgrade: DeepSeek Sparse Attention, cutting long-context serving costs (200K context, 128K output). Positioned for agentic engineering and long-horizon tasks: less flashy, more enterprise-ready.

Strong internal coding scores, SOTA among peers on BrowseComp, top open model on Vending Bench 2. On GDPVal-AA (white-collar benchmark), it edges out Kimi K2.5.

Artificial Analysis ranks it the new leading open-weights model (Intelligence Index 50).

Major hallucination reduction, but it ships in heavy BF16 (~1.5TB), so self-hosting isn’t trivial. MIT licensed, with day-0 support across vLLM, SGLang, OpenRouter, Modal, Ollama, and more.
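The ~1.5TB figure is consistent with simple arithmetic on the reported parameter count. A quick sanity check, assuming 2 bytes per BF16 weight and counting weights only (no KV cache, activations, or runtime overhead); the 80 GB accelerator size is a hypothetical chosen for illustration:

```python
BYTES_PER_BF16 = 2              # BF16 stores each weight in 16 bits
TOTAL_PARAMS = 744 * 10**9      # reported total parameters
ACTIVE_PARAMS = 40 * 10**9      # reported active parameters per token
GPU_BYTES = 80 * 10**9          # hypothetical 80 GB accelerator

weight_bytes = TOTAL_PARAMS * BYTES_PER_BF16
weights_tb = weight_bytes / 10**12

# Ceiling division: smallest GPU count whose combined memory fits the weights.
min_gpus = -(-weight_bytes // GPU_BYTES)

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"BF16 weights: {weights_tb:.3f} TB")
print(f"80 GB GPUs needed for weights alone: {min_gpus}")
print(f"active share per token: {active_fraction:.1%}")
```

Real deployments need headroom beyond this lower bound, so the practical GPU count would be higher.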

MiniMax dropped M2.5, pushing fast iteration via Agent apps and partner surfaces while openly balancing compute vs ship speed.

StepFun’s Flash-3.5 claims #1 on MathArena, with strong performance per active parameter and high throughput.

Qwen patched Qwen-Image 2.0 (classical poem ordering + editing consistency) and teased Qwen3-Coder-Next (80B) with bold SWE-Bench + 10× throughput claims.

The pattern: fast coding models, tighter loops, aggressive iteration.

But the real wedge is price.

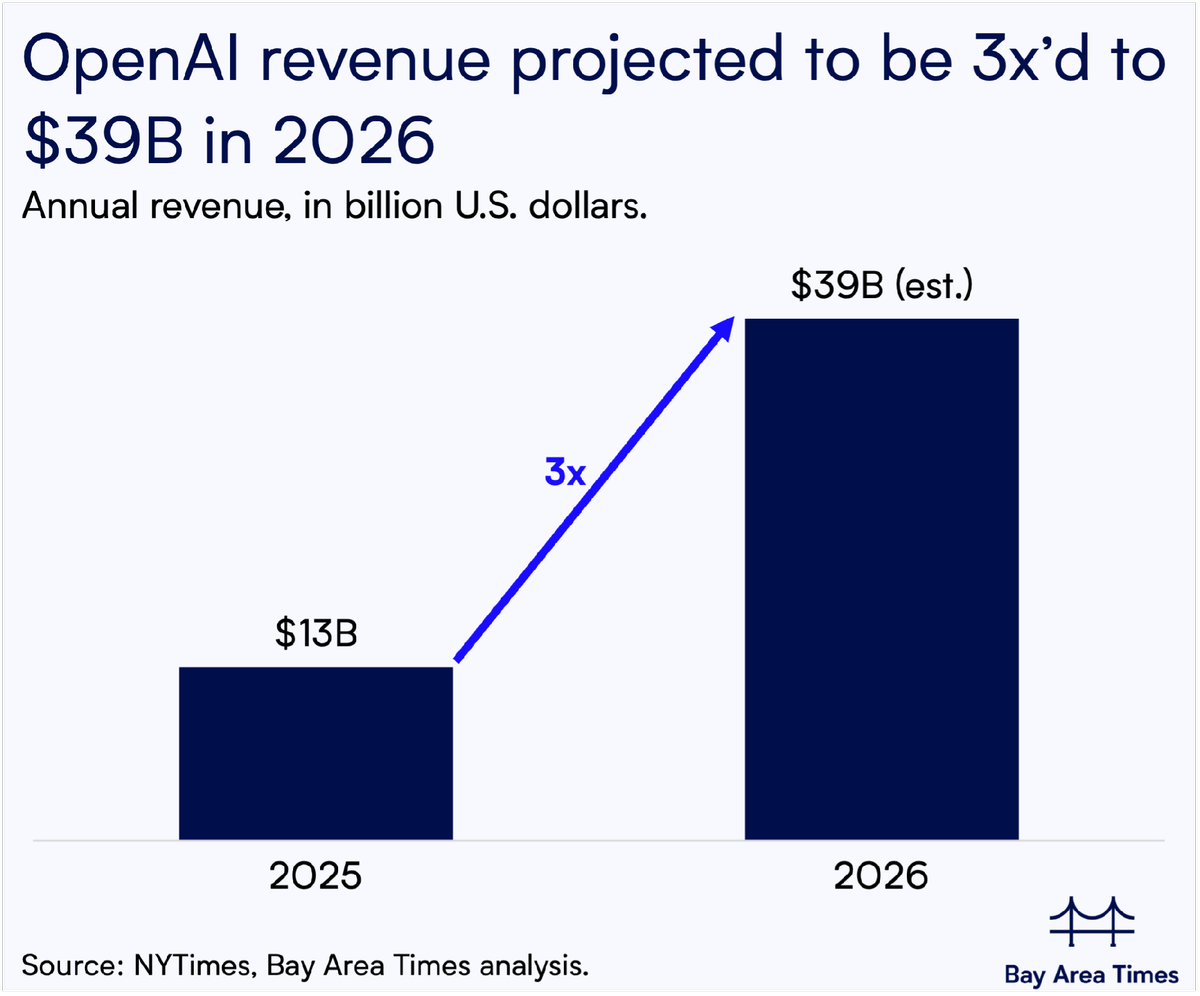

Posters argue Chinese labs are delivering ~90% frontier capability at 1/5–1/10 the cost. GLM-5 pricing and router distribution reinforce the pressure on incumbents. If sustained, coding could be the first category where cost reshapes market share.

Momentum is clear across DeepSeek, MiniMax, and GLM: China’s open ecosystem isn’t slowing down.

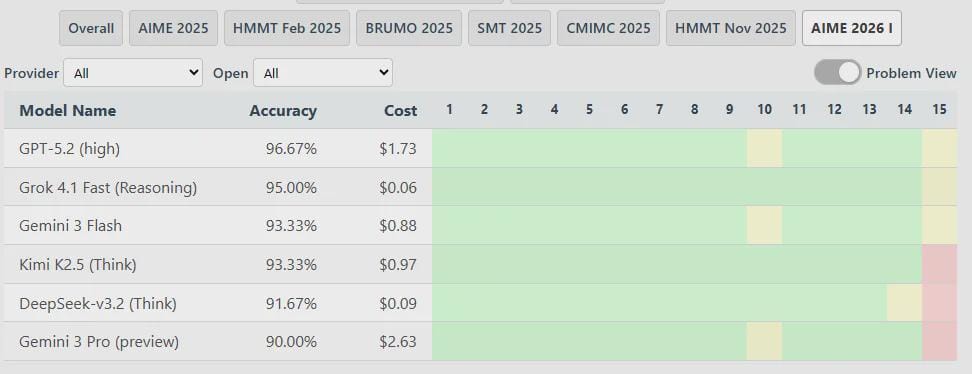

AIME 2026 is out: Kimi K2.5 (93.33%) and DeepSeek V3.2 (91.67%) lead the open-source pack, competitive and cost-efficient. On the closed side, Grok 4.1 Fast surprises with strong performance at a low price point.

Only six models were tested, and DeepSeek V3.2 Speciale is missing, so read the leaderboard with that context in mind.

Did You Know? A day on Venus is longer than a year on Venus.

Till next time,

Bindu Daily Pulse 🌻

We’re making the Internet of Agents accessible and open for everyone.

![🌻 [AINews] DeepSeek “V4-lite” / 1M context rollout - China’s “open model week” continues.](https://media.beehiiv.com/cdn-cgi/image/fit=scale-down,quality=80,format=auto,onerror=redirect/uploads/asset/file/66f4b0dc-d607-4344-bb5f-ef32188477cf/0_2.jpeg)